You need more data quality in CRM!

Insights, Tips and 11 Quality Criteria

for the correct use of your data

As business processes are increasingly digitized, more and more companies are introducing a CRM system that is networked with the rest of their IT landscape. This leads to faster processes, cost savings and improved data storage. That last point is particularly important, because every activity and analysis in CRM is only as good as its data quality allows. Unfortunately, introducing CRM software is not the end of the story: there are a few more aspects to consider. Read on to find out what actually constitutes good data quality and how you can achieve it.


What is data quality in CRM and why is it important?

Data quality indicates how well suited your data is to its intended use. This is also referred to as “fitness for use”. As this definition shows, the assessment of data quality is highly context-dependent: a record can be perfectly good for one purpose but not for another.

Data quality is especially important wherever data is used as a resource: in your CRM system, among other places, but also in the systems connected to it. In your company, this may include the following areas:

- Extensive analyses à la business analytics

- Business Intelligence

- Dashboards and Reports

- 360° view of your customers

- Newsletter dispatch

- Production processes

- Invoicing

In the age of digitization, all of these areas depend on the underlying data collections. Low data quality can have costly consequences.

The Rule of Ten applies to data quality in CRM:

- Introducing an IT solution that ensures clean data at the point of entry costs €1 per record.

- Performing data cleansing at defined intervals costs €10 per record.

- Doing nothing costs €100 per record: returned mail, missed sales opportunities, low productivity.
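To get a feeling for what the Rule of Ten means in practice, you can run the numbers for your own database. The following is a minimal sketch: the strategy names and the figure of 5,000 faulty records are made-up assumptions, and only the three cost factors come from the rule itself.

```python
# Cost factors per record from the Rule of Ten (in euros).
COST_PER_RECORD = {
    "prevent": 1,    # clean data at the point of entry
    "correct": 10,   # data cleansing at defined intervals
    "ignore": 100,   # doing nothing and paying for the consequences
}


def rule_of_ten_costs(faulty_records: int) -> dict[str, int]:
    """Return the estimated total cost in euros for each strategy."""
    return {
        strategy: cost * faulty_records
        for strategy, cost in COST_PER_RECORD.items()
    }


# 5,000 records is an invented example figure, not a benchmark.
for strategy, total in rule_of_ten_costs(5_000).items():
    print(f"{strategy}: {total:,} EUR")
```

For 5,000 affected records, the gap between preventing errors and ignoring them is already the gap between 5,000 and 500,000 euros.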

Loosely based on the adage “garbage in, garbage out,” we can assume that the best algorithm won’t do you much good if you don’t feed it the data it needs. If you run it anyway because too little attention is paid to data quality, analysis and process errors will follow. The longer these go unnoticed, and perhaps are even carried forward, the greater the negative consequences.

These consequences can take quite different forms. Bad decisions may be made on the basis of incorrect analysis results. Processes may run incorrectly, or customers contacted by mistake may even sue you because they should no longer have been in your system. According to a study by the MIT Sloan Management Review, companies lose around 15-25% of their revenues due to poor data quality. And you can be fairly sure that your IT department will need extra effort to iron out the errors that occur.

11 criteria for measuring your data quality

1. Completeness

Is your data complete or are there gaps? For example, is gender information missing from your newsletter distribution list, which can lead to embarrassing errors in customer contact?
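Completeness can be expressed as a simple ratio: how many of your records have a given field filled in? Here is a small sketch of that idea. The field names and sample contacts are invented for illustration, and a real CRM export will look different.

```python
def completeness(records: list[dict], field: str) -> float:
    """Share of records (0.0 to 1.0) in which `field` is filled in."""
    if not records:
        return 1.0  # nothing to check, so nothing is missing
    filled = sum(1 for r in records if str(r.get(field) or "").strip())
    return filled / len(records)


# Invented sample data: two of four contacts lack a salutation.
contacts = [
    {"email": "a@example.com", "salutation": "Ms."},
    {"email": "b@example.com", "salutation": ""},
    {"email": "c@example.com"},
    {"email": "d@example.com", "salutation": "Mr."},
]

print(completeness(contacts, "salutation"))  # 0.5
```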

2. Unambiguousness

Can every record be interpreted unambiguously?

3. Correctness

This is about plausibility checks, for example for age data. Is someone 120 years old according to their date of birth? Then there is a high probability that an error has occurred.
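A plausibility check like this is easy to automate. The sketch below flags birth dates that would make a contact implausibly old or that lie in the future. The upper limit of 110 years is an assumption chosen for illustration, so pick a threshold that fits your own data.

```python
from datetime import date

MAX_PLAUSIBLE_AGE = 110  # assumed threshold, adjust to your use case


def age_on(birth_date: date, today: date) -> int:
    """Age in full years on the given day."""
    years = today.year - birth_date.year
    # Subtract one year if the birthday has not yet occurred this year.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


def is_plausible_birth_date(birth_date: date, today: date) -> bool:
    """True if the resulting age is between 0 and MAX_PLAUSIBLE_AGE."""
    return 0 <= age_on(birth_date, today) <= MAX_PLAUSIBLE_AGE


today = date(2024, 6, 1)
print(is_plausible_birth_date(date(1990, 7, 15), today))  # True
print(is_plausible_birth_date(date(1904, 1, 1), today))   # False (age 120)
```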

4. Up-to-dateness

Whether you maintain your data or not, it loses relevance over time. Think, for example, of relocations, job changes, etc.
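One simple way to keep an eye on this is to record when a contact was last verified and to flag records that have not been checked for a while. This sketch assumes such a "last verified" date exists in your system. The review interval of 365 days is likewise just an example value.

```python
from datetime import date, timedelta

MAX_AGE = timedelta(days=365)  # assumed review interval


def is_stale(last_verified: date, today: date) -> bool:
    """True if the record was last verified longer ago than MAX_AGE."""
    return today - last_verified > MAX_AGE


today = date(2024, 1, 1)
print(is_stale(date(2022, 1, 1), today))   # True
print(is_stale(date(2023, 12, 1), today))  # False
```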

5. Consistency

Alongside criteria such as completeness and unambiguousness, consistency is also assessed: your data must not contradict itself. According to the date of birth, your customer is 120, but the age field says 12? There is probably a mistake here.

6. Accuracy

This matters more for extensive analyses than for address databases. It can sometimes make a big difference whether values are recorded with 2, 3 or 4 decimal places. However, you should consider where you need great accuracy and where you do not. Otherwise, you run the risk of inflating your data unnecessarily.

7. Freedom from redundancy

This is again closely related to unambiguousness. Records should not be evaluated twice, because this distorts your results. Worse still, it can lead to conflicting interpretations. Duplicates must therefore be avoided or at least removed during cleansing.
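Removing duplicates during cleansing can look roughly like the sketch below. It treats two records as the same contact when their name and e-mail address match after lowercasing and trimming whitespace. That matching key is a deliberately simple assumption, and real CRM deduplication usually needs fuzzier rules.

```python
def dedupe_key(record: dict) -> tuple[str, str]:
    """Hypothetical matching key: normalized name plus normalized e-mail."""
    name = " ".join(record.get("name", "").lower().split())
    email = record.get("email", "").strip().lower()
    return (name, email)


def remove_duplicates(records: list[dict]) -> list[dict]:
    """Keep the first record for each key and drop later repeats."""
    seen = set()
    unique = []
    for record in records:
        key = dedupe_key(record)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


# Invented sample data: the first two entries are the same person.
contacts = [
    {"name": "Anna Meier", "email": "anna.meier@example.com"},
    {"name": "anna  meier", "email": "Anna.Meier@example.com "},
    {"name": "Jonas Weber", "email": "j.weber@example.com"},
]

print(len(remove_duplicates(contacts)))  # 2
```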

8. Relevance

Use only the data that is relevant to your use case. For example, make sure that you do not use last year's figures for the current quarterly report.

9. Uniformity

When collecting data, entries can deviate from the usual spelling. For example, you will find Cologne, Koeln and KÖLN side by side. This hinders meaningful data analysis, so spellings should be uniform. Note: time and currency formats are classic cases here, too.
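Spelling variants like these can be mapped to one canonical form before analysis. The sketch below lowercases the input, rewrites German umlauts and then looks the result up in a small alias table. The table and the choice of "Cologne" as the canonical spelling are assumptions for illustration.

```python
import unicodedata

# Hypothetical alias table: normalized spelling -> canonical spelling.
CITY_ALIASES = {"cologne": "Cologne", "koeln": "Cologne"}

# Rewrite umlauts so that "köln" and "koeln" end up identical.
UMLAUTS = str.maketrans({"ä": "ae", "ö": "oe", "ü": "ue"})


def normalize_city(raw: str) -> str:
    """Return the canonical city name, or the trimmed input if unknown."""
    key = unicodedata.normalize("NFC", raw).strip().casefold().translate(UMLAUTS)
    return CITY_ALIASES.get(key, raw.strip())


for spelling in ["Cologne", "Koeln", "KÖLN"]:
    print(normalize_city(spelling))  # prints "Cologne" each time
```

Values that are not in the alias table are returned unchanged apart from trimming, so nothing is silently rewritten.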

10. Reliability

You should be able to trace which sources your data comes from and whether they are reliable. For example, data from public sources is often of lower quality. For data that arrives via internal interfaces, you should regularly check that these interfaces are fully functional.

11. Intelligibility

If attribute names or attribute values are encoded, they should be translated into intelligible terms for processing. For example, a program encodes salutations as 1 = Ms., 2 = Mr., and so on. To avoid misunderstandings, these codes should be decoded again.
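Decoding usually comes down to a lookup table. In the following sketch, the labels and the handling of unknown codes are assumptions, because the actual code list depends on the program that produced the data.

```python
# Assumed code list for salutations; check it against your source system.
SALUTATION_LABELS = {1: "Ms.", 2: "Mr."}


def decode_salutation(code: int) -> str:
    """Translate a numeric salutation code into a readable label."""
    # Unknown codes are made visible instead of being silently dropped.
    return SALUTATION_LABELS.get(code, f"unknown code {code}")


print(decode_salutation(1))  # Ms.
print(decode_salutation(7))  # unknown code 7
```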

Sources of error that reduce your data quality

Having looked at the criteria for data quality, you can probably imagine that the sources of error in data management are manifold. The most common errors occur when employees enter data. This is especially the case when multiple departments have different approaches to or expectations of data analysis. In addition, errors often occur during system changes or when merging data from different sources.

Such errors are annoying and produce unreliable results. Not least, the costs incurred will be a nuisance: the longer errors are carried on unnoticed, the higher the costs for subsequent clean-up, re-evaluation, etc.

Avoid mistakes before they are made

Regular data cleansing is usually necessary, but you save resources with every error that does not occur. In this respect, it is worth taking measures to avoid errors in advance.

Here are some examples:

- System integration & interfaces

The comprehensive integration of your IT landscape is an important factor. We’re talking about the Single Source of Truth (or Single Point of Truth): one source from which the right data is obtained, whether that is your CRM system or the ERP. Nowadays, it is no longer particularly complex to integrate or link systems that actively exchange data via interfaces. In this way, data exchange is automated and immediately becomes much less prone to errors.

- Mandatory fields

Set mandatory fields in your input masks. This ensures that the information you need for your data processing is in place. You have then already ticked off the criterion of completeness.

- Automatic checks/filling

In many places, automatic checks or auto-fill can ensure that the correct format or information ends up in a field as soon as it is entered.

Tip: Last but not least, drop-down menus in input masks are good for narrowing down a selection and avoiding spelling mistakes.
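The last three measures can be combined in a single validation step that runs when a form is submitted. The sketch below checks mandatory fields, applies a simple format check to the e-mail address and restricts the country to a fixed list of values, much like a drop-down menu would. All field names, the country list and the deliberately loose e-mail pattern are assumptions for illustration, not the behavior of any particular CRM.

```python
import re

MANDATORY_FIELDS = ("last_name", "email", "country")  # assumed field names
ALLOWED_COUNTRIES = {"DE", "AT", "CH"}  # stands in for a drop-down list
# Deliberately simple pattern: something@something.something
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_contact(form: dict) -> list[str]:
    """Return a list of error messages; an empty list means the input is OK."""
    errors = []
    for field in MANDATORY_FIELDS:
        if not str(form.get(field) or "").strip():
            errors.append(f"{field} is required")

    email = str(form.get("email") or "").strip()
    if email and not EMAIL_PATTERN.match(email):
        errors.append("email has an invalid format")

    country = str(form.get("country") or "").strip()
    if country and country not in ALLOWED_COUNTRIES:
        errors.append("country is not one of the allowed values")
    return errors


good = {"last_name": "Meier", "email": "meier@example.com", "country": "DE"}
bad = {"last_name": "", "email": "meier(at)example", "country": "Cologne"}

print(validate_contact(good))  # []
print(validate_contact(bad))
```

Returning all problems at once, rather than stopping at the first one, lets the person entering the data correct everything in a single pass.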

What is data quality management?

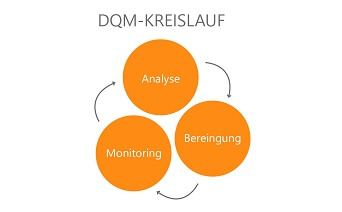

The above measures already belong to the area of data quality management, which is responsible for the strategic management of your data and for safeguarding data quality in CRM over the long term. It also covers tasks for dealing with data errors that nevertheless creep in now and then or that arise simply through the passage of time.

By now, you are surely aware of how important good data quality in CRM is for your business. And you have probably also realized that ensuring high data quality on a permanent basis is no small feat. Data quality management should therefore have a permanent place in your in-house data culture.

How good does your data quality in CRM have to be?

Despite the great importance of data quality, it has to be said quite bluntly that 100% data quality in CRM is almost impossible to achieve. In addition, the required data quality always depends on the application context and the errors that are present. However, if you are aware of this and take the appropriate measures, you can be confident that your data quality in CRM will be sufficient for your purpose, because you can then define what data you need, what purpose it will serve and therefore which errors you need to rule out. Once that is done, your data should give you the reliable and meaningful results you want from CRM software.

Conclusion

You can now surely confirm for yourself that you need more data quality in your CRM. Always keep in mind that you need to be clear about the goals of your data processing and the possible errors in order to achieve high data quality. And never forget that this is an ongoing task: always keep an eye on your data quality in CRM.